
Elaborating on this fact, one can actually add points to the data set without influencing the hyperplane, as long as these points lie outside of the margin.

These points support the hyperplane in the sense that they contain all the required information to compute the hyperplane: removing other points does not change the optimal separating hyperplane. In particular, it gives rise to the so-called support vectors which are the data points lying on the margin boundary of the hyperplane. The optimal separating hyperplane is one of the core ideas behind the support vector machines. The optimal separating hyperplane should not be confused with the optimal classifier known as the Bayes classifier: the Bayes classifier is the best classifier for a given problem, independently of the available data but unattainable in practice, whereas the optimal separating hyperplane is only the best linear classifier one can produce given a particular data set. As a consequence, the larger the margin is, the less likely the points are to fall on the wrong side of the hyperplane.įinding the optimal separating hyperplane can be formulated as a convex quadratic programming problem, which can be solved with well-known techniques. Thus, if the separating hyperplane is far away from the data points, previously unseen test points will most likely fall far away from the hyperplane or in the margin. New test points are drawn according to the same distribution as the training data. The idea behind the optimality of this classifier can be illustrated as follows.

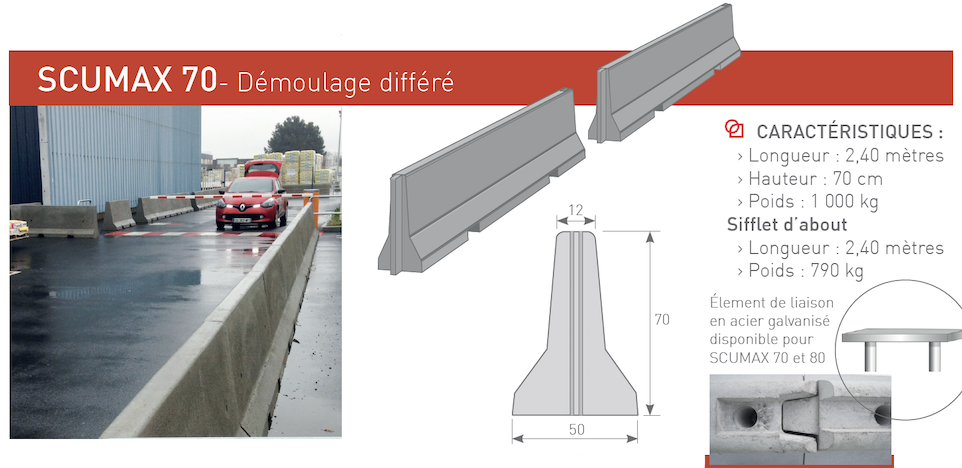

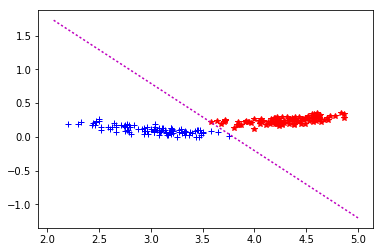

In this respect, it is said to be the hyperplane that maximizes the margin, defined as the distance from the hyperplane to the closest data point. In a binary classification problem, given a linearly separable data set, the optimal separating hyperplane is the one that correctly classifies all the data while being farthest away from the data points. now if you try to plot it in 1D you will end up with the whole line "filled" with your hyperplane, because no matter where you place a line in 3D, projection of the 2D plane on this line will fill it up! The only other possibility is that the line is perpendicular and then projection is a single point the same applies here - if you try to project 49 dimensional hyperplane onto 3D you will end up with the whole screen "black").The optimal separating hyperplane and the margin In words. Exactly no pixel would be left "outside" (think about it in this terms - if you have 3D space and hyperplane inside, this will be 2D plane. However this only shows points projetions and their classification - you cannot see the hyperplane, because it is highly dimensional object (in your case 49 dimensional) and in such projection this hyperplane would be. Pred <- predict(svm, )įor more examples you can refer to project website

# We can pass either formula or explicitly X and Y # We will perform basic classification on breast cancer dataset Simply train svm and plot it forcing "pca" visualization, like here. If you are not familiar with underlying linear algebra you can simply use gmum.r package which does this for you. You can obviously take a look at some slices (select 3 features, or main components of PCA projections). With 50 features you are left with statistical analysis, no actual visual inspection. In order to plot 2D/3D decision boundary your data has to be 2D/3D (2 or 3 features, which is exactly what is happening in the link provided - they only have 3 features so they can plot all of them). The only thing you can do are some rough approximations, reductions and projections, but none of these can actually represent what is happening inside.
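The convex quadratic program mentioned in the text can be written out explicitly. The notation here is an assumption, since the text introduces no symbols: training pairs $(x_i, y_i)$, $i = 1, \dots, N$, with labels $y_i \in \{-1, +1\}$, and a hyperplane $\{x : w^\top x + b = 0\}$. In its standard hard-margin form for linearly separable data, the problem reads:

$$
\min_{w,\,b} \ \frac{1}{2}\,\|w\|^2
\quad \text{subject to} \quad
y_i \left( w^\top x_i + b \right) \ge 1, \qquad i = 1, \dots, N.
$$

With this scaling, the distance from the hyperplane to the closest data point is $1/\|w\|$, so minimizing $\|w\|$ maximizes the margin. The support vectors are the points for which the constraint holds with equality.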

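The projection argument (a 2D plane in 3D projected onto a line) can be checked numerically. The sketch below is only an illustration in plain Python and is not part of gmum.r: the plane coefficients and the helper names `sample_plane` and `projection_range` are made up for this example. It samples points on a plane, projects them onto a generic line and onto a line perpendicular to the plane, and compares how much of each line the projections cover.

```python
import math
import random


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def cross(u, v):
    return (
        u[1] * v[2] - u[2] * v[1],
        u[2] * v[0] - u[0] * v[2],
        u[0] * v[1] - u[1] * v[0],
    )


def unit(v):
    n = math.sqrt(dot(v, v))
    return tuple(a / n for a in v)


def sample_plane(w, b, n_points, half_width, rng):
    """Sample points x in 3D that satisfy w . x + b = 0."""
    # Point of the plane closest to the origin.
    scale = -b / dot(w, w)
    p0 = tuple(scale * a for a in w)
    # Two orthonormal directions lying inside the plane. The coordinate axis
    # least aligned with w is used so the first cross product is never zero.
    k = min(range(3), key=lambda i: abs(w[i]))
    axis = tuple(1.0 if i == k else 0.0 for i in range(3))
    d1 = unit(cross(w, axis))
    d2 = unit(cross(w, d1))
    points = []
    for _ in range(n_points):
        s = rng.uniform(-half_width, half_width)
        t = rng.uniform(-half_width, half_width)
        points.append(tuple(p + s * a + t * c for p, a, c in zip(p0, d1, d2)))
    return points


def projection_range(points, direction):
    """Return (min, max) of the 1D coordinates of the points along direction."""
    u = unit(direction)
    coords = [dot(p, u) for p in points]
    return min(coords), max(coords)


rng = random.Random(0)
w, b = (1.0, 2.0, 2.0), -3.0  # hypothetical plane: x + 2y + 2z - 3 = 0
plane = sample_plane(w, b, n_points=1000, half_width=10.0, rng=rng)

lo_gen, hi_gen = projection_range(plane, (1.0, 0.0, 0.0))  # generic line
lo_nrm, hi_nrm = projection_range(plane, w)  # line perpendicular to the plane

print("generic line: projections span", hi_gen - lo_gen)
print("perpendicular line: projections span", hi_nrm - lo_nrm)
```

The span along the generic line grows with `half_width`, which is the "filled line" effect, while the span along the perpendicular line stays at zero up to rounding error. The same reasoning carries over to a 49-dimensional hyperplane projected onto a 2D or 3D plot.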